Immersion cooling fluids are taking on more importance as data‑center operators deploy higher‑power artificial intelligence (AI) and high-performance computing (HPC) systems. Rack densities are increasing faster than traditional air systems can accommodate, and liquid cooling is now part of the design discussion for many new builds and retrofits. In that context, fluid properties affect reliability, maintenance, warranties, and long‑term operating cost, not just heat removal.

1) Why demand for immersion cooling is increasing

Heat density is the main driver of immersion cooling demand. New GPU generations used in AI training and inference run at much higher power levels than earlier hardware. Industry discussions that ADI has facilitated around NVIDIA Blackwell and follow-on platforms routinely reference rack designs well above 50 kW, with some deployments moving past 100 kW. At those levels, air cooling requires very high airflow, fan power, and chiller capacity, all of which become difficult and expensive to scale.
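To give a sense of scale for the airflow point, here is a rough back-of-the-envelope sketch based on the sensible-heat relation: flow = heat load / (density × specific heat × temperature rise). The air properties and the 15 K inlet-to-outlet temperature rise are assumptions chosen for illustration, not figures from ADI's research or from any specific facility, and the function names are hypothetical.

```python
def required_volumetric_flow(heat_load_w, density_kg_m3, specific_heat_j_kg_k, delta_t_k):
    """Volumetric coolant flow (m^3/s) needed to carry a heat load at a given temperature rise."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_load_w / (density_kg_m3 * specific_heat_j_kg_k * delta_t_k)


# Assumed values: air near room temperature at sea level (illustrative only).
AIR_DENSITY_KG_M3 = 1.2
AIR_SPECIFIC_HEAT_J_KG_K = 1005.0
ASSUMED_DELTA_T_K = 15.0      # assumed air temperature rise across the rack
M3_PER_S_TO_CFM = 2118.88     # unit conversion, cubic meters per second to cubic feet per minute


def air_flow_for_rack(rack_kw, delta_t_k=ASSUMED_DELTA_T_K):
    """Airflow (m^3/s) needed to remove rack_kw of heat with air under the assumptions above."""
    return required_volumetric_flow(
        rack_kw * 1000.0, AIR_DENSITY_KG_M3, AIR_SPECIFIC_HEAT_J_KG_K, delta_t_k
    )


if __name__ == "__main__":
    for rack_kw in (50, 100):
        flow = air_flow_for_rack(rack_kw)
        print(f"{rack_kw} kW rack: {flow:.2f} m^3/s (about {flow * M3_PER_S_TO_CFM:,.0f} CFM)")
```

Under these assumptions, a 100 kW rack needs roughly 5.5 m^3/s of air, twice the flow of a 50 kW rack. The same function accepts a liquid's density and specific heat, which is one way to compare the volumetric flow a dielectric fluid would need for the same load.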

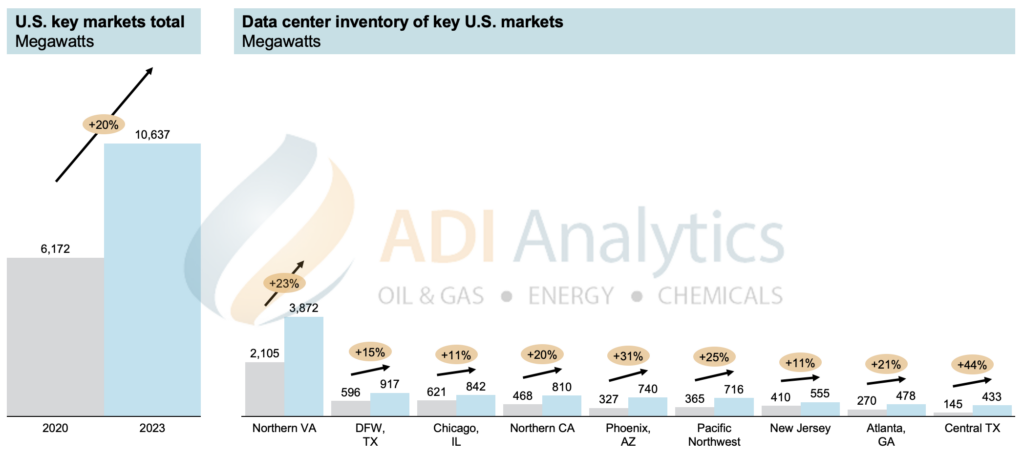

Infrastructure limits add to the pressure. In large U.S. data-center markets such as Northern Virginia, Atlanta, Phoenix, and Dallas, operators face constraints around power availability, water use, zoning, or all three. Exhibit 1 shows the growth in key U.S. data-center markets from 2020 to 2023.

Exhibit 1. Growth in key U.S. data-center markets from 2020 to 2023, in megawatts.

ADI's research indicates that Northern Virginia alone has more than 47 GW of contracted data-center capacity. The result is an environment where adding capacity often means densifying existing sites rather than building greenfield facilities. Liquid and immersion cooling are practical tools in that setting.

Reliability considerations also matter. Air systems rely on distributed fans, filters, and moving components across white space and mechanical rooms. Immersion systems simplify parts of the cooling loop by removing server fans and reducing airflow management inside the data hall. For operators running dense, continuous workloads, this changes how thermal risk is managed.

Across client projects led by ADI, there is consistent agreement that liquid cooling is effective, but debate remains over which projects require it first and how quickly it will become standard practice.

2) What the cooling fluid does in an immersion system

Immersion cooling fluids are dielectric liquids. They remove heat while remaining electrically insulating and chemically stable over long operating periods.

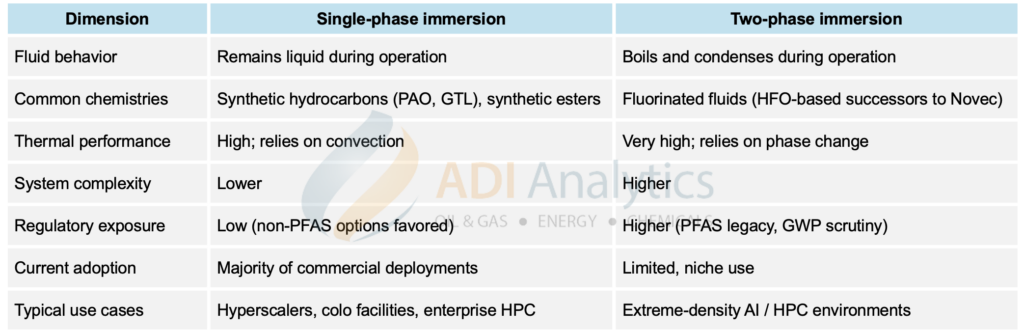

Most current commercial deployments use single-phase fluids. These remain liquid during operation and circulate through tanks and heat exchangers. Common chemistries include synthetic hydrocarbons such as polyalphaolefins (PAO) and gas-to-liquid fluids, as well as ester-based formulations. Operators tend to favor these systems because they are easier to integrate, maintain, and scale. As a result, ADI has helped several producers of these fluids explore and develop data-center applications to open new markets and capture emerging demand.

Two‑phase fluids boil and condense during operation, allowing higher heat transfer per unit volume. These systems are typically reserved for very high‑density use cases and introduce additional complexity around chemistry, containment, and regulation.

Exhibit 2 below compares single-phase and two-phase immersion cooling fluids. Across both approaches, selection comes down to a small set of practical properties: viscosity, thermal conductivity, dielectric strength, flash point, oxidation stability, and how the fluid interacts with server materials. One recurring point in operator interviews is compatibility with thermal interface materials (TIMs), the compounds placed between chips and heat spreaders to ensure efficient heat transfer, as well as with cables, plastics, seals, and labels.

Exhibit 2. Comparison of single-phase and two-phase immersion cooling fluid approaches.

3) What slows adoption in actual deployments

Approval timelines are a key challenge and are driven by material behavior and operating history.

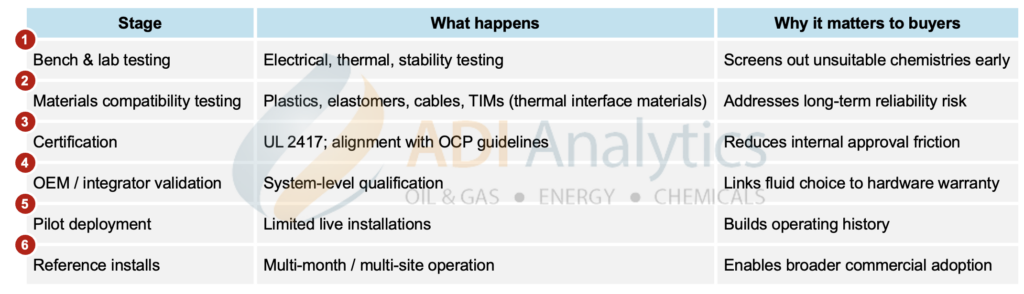

Cooling fluids come into contact with nearly every component inside a server. If a fluid extracts plasticizers from cables, swells elastomer seals, or degrades TIMs, the effect may not be visible immediately. This is why operators and OEMs ask for long-duration compatibility testing and reference installations, not just lab data. Exhibit 3 below describes a typical immersion cooling fluid qualification path, which can be broken down into six stages.

Exhibit 3. Typical immersion cooling fluid qualification path.

Cleanliness is another recurring issue. Moisture and fine particulates can reduce dielectric strength and increase electrical risk. This pushes requirements upstream into blending, filtration, packaging, transport, and on‑site handling. Several hyperscalers and colocation providers interviewed in ADI research highlighted contamination control as a gating issue during internal reviews.

Standards help shorten these discussions. UL 2417 certification and alignment with Open Compute Project immersion guidelines frequently appear as requirements because they give engineering, operations, and risk teams a shared reference point. GPU warranties also influence decision paths, with fluid acceptance often tied to system‑level qualification through server OEMs rather than facility teams alone.

4) What suppliers are expected to support over time

Once immersion systems have been deployed successfully, suppliers need the capabilities to support customers well beyond the initial fluid fill.

Ongoing sampling, condition monitoring, filtration, and fluid management become part of normal operations. Some suppliers also offer recycling or reconditioning programs to extend fluid life and reduce replacement cost. These services matter because they reduce uncertainty for operations teams and help maintain performance over multi‑year horizons.
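As a minimal sketch of what routine condition monitoring could look like in practice, the snippet below screens a fluid sample against a set of limits. Everything here is hypothetical: the class and field names, the choice of measured properties, and especially the limit values, which are placeholders and not taken from any standard, supplier specification, or ADI data. Real limits would come from the fluid supplier and the server OEM's qualification documents.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FluidSample:
    """One lab result for a tank sample (hypothetical fields)."""
    moisture_ppm: float
    breakdown_voltage_kv: float
    particles_per_ml: float


@dataclass(frozen=True)
class SampleLimits:
    """Acceptance limits; values must be supplied by the operator or supplier."""
    max_moisture_ppm: float
    min_breakdown_voltage_kv: float
    max_particles_per_ml: float


def check_sample(sample: FluidSample, limits: SampleLimits) -> list[str]:
    """Return a list of findings; an empty list means the sample is within limits."""
    findings = []
    if sample.moisture_ppm > limits.max_moisture_ppm:
        findings.append("moisture above limit")
    if sample.breakdown_voltage_kv < limits.min_breakdown_voltage_kv:
        findings.append("breakdown voltage below limit")
    if sample.particles_per_ml > limits.max_particles_per_ml:
        findings.append("particle count above limit")
    return findings


# Placeholder limits for illustration only; not real acceptance criteria.
PLACEHOLDER_LIMITS = SampleLimits(
    max_moisture_ppm=100.0,
    min_breakdown_voltage_kv=40.0,
    max_particles_per_ml=1000.0,
)

if __name__ == "__main__":
    sample = FluidSample(moisture_ppm=150.0, breakdown_voltage_kv=45.0, particles_per_ml=200.0)
    print(check_sample(sample, PLACEHOLDER_LIMITS))
```

Returning a list of findings rather than a single pass/fail flag keeps the result useful for trend tracking, since operations teams typically want to know which property drifted, not just that something did.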

In ADI's experience supporting clients, this is where entry strategies often need refinement. Companies considering this space need to understand which capabilities customers expect in-house, which can be outsourced, and how support models affect pricing and margins. In recent client engagements, ADI has spent as much time mapping qualification processes, service expectations, and risk ownership as on market sizing and entry strategies.

Immersion cooling continues to expand because it addresses concrete operating constraints. The associated fluids business sits at the intersection of chemistry, hardware reliability, and data‑center operations. Companies that align their product and service approach with how operators actually assess risk tend to move through adoption cycles more smoothly.

– Uday Turaga

About ADI Analytics

ADI is a prestigious, boutique consulting firm specializing in oil and gas, energy, and chemicals since 2009. We bring deep expertise in a broad range of markets where we support Fortune 500, mid-sized and early-stage companies, and investors with consulting services, research reports, and data and analytics, with the goal of delivering actionable outcomes to help our clients achieve tangible results.

We also host the ADI Forum, which brings C-suite executives together for meaningful dialogue and strategic insights across the oil & gas, energy transition, and chemicals value chains.
